ISI MSTAT PSB 2011 Problem 4 | Digging deep into Multivariate Normal

This is an interesting problem from ISI MSTAT PSB 2011 (Problem 4) that tests how well a student can visualize the normal distribution in higher dimensions.

Suppose that \( X_1,X_2,... \) are independent and identically distributed \(d\)-dimensional normal random vectors. Consider a fixed \( x_0 \in \mathbb{R}^d \) and for \(i=1,2,...,\) define \(D_i = \| X_i - x_0 \| \), the Euclidean distance between \( X_i \) and \(x_0\). Show that for every \( \epsilon > 0 \), \(P[\min_{1 \le i \le n} D_i > \epsilon] \rightarrow 0 \) as \( n \rightarrow \infty \).

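First of all, see that \( P(\min_{1 \le i \le n} D_i > \epsilon)=P(D_1 > \epsilon)^n \): the minimum exceeds \( \epsilon \) exactly when every \( D_i \) does, and the \( D_i \) are independent and identically distributed because the \( X_i \) are. (Verify yourself!)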
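The identity above can be sanity-checked by simulation. The following is only a sketch: it assumes standard normal vectors (mean 0, identity covariance), and the values \( d = 2 \), \( x_0 = (1, 0) \), \( \epsilon = 1.5 \), \( n = 3 \) are illustrative choices, not part of the problem.

```python
import math
import random

def distance_to_x0(x0, rng):
    """Draw one standard normal vector in R^d and return its distance to x0."""
    return math.sqrt(sum((rng.gauss(0.0, 1.0) - c) ** 2 for c in x0))

def check_product_identity(x0, eps, n, trials=100_000, seed=0):
    """Return (empirical P(min_i D_i > eps), empirical P(D_1 > eps)**n)."""
    rng = random.Random(seed)
    min_exceeds = 0
    single_exceeds = 0
    for _ in range(trials):
        dists = [distance_to_x0(x0, rng) for _ in range(n)]
        if min(dists) > eps:
            min_exceeds += 1
        if dists[0] > eps:
            single_exceeds += 1
    return min_exceeds / trials, (single_exceeds / trials) ** n

lhs, rhs = check_product_identity(x0=(1.0, 0.0), eps=1.5, n=3)
print(f"P(min D_i > eps) ~ {lhs:.4f},  P(D_1 > eps)^n ~ {rhs:.4f}")
```

The two printed numbers should agree up to Monte Carlo error, which is what the product formula predicts.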

What we really care about, though, is the event \( \{D_i < \epsilon \} \), since \( P(D_i > \epsilon) \le 1 - P(D_i < \epsilon) \).

Let me elaborate why this makes sense!

Let \( \phi \) denote the \( d \)-dimensional Gaussian density (taken here to be the standard one, with mean 0 and identity covariance, which is what the symmetry argument below relies on), and let \( B(x_0, \epsilon) \) be the Euclidean ball around \( x_0 \) of radius \( \epsilon \). Note that \( \{D_i < \epsilon\} \) is the event that the Gaussian \( X_i \) lands in this Euclidean ball.

So, if we can show that this event has positive probability for any given pair \( (x_0, \epsilon) \), we will be done, since then in the limit we will be raising a number strictly less than 1 to a power that grows larger and larger.

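In particular, we have that \( P(D_i < \epsilon)= \int_{B(x_0, \epsilon)} \phi(x) dx \geq |B(x_0, \epsilon)| \inf_{x \in B(x_0, \epsilon)} \phi(x) \), where \( |B(x_0, \epsilon)| \) is the volume of the ball. For the standard Gaussian, \( \phi(x) \) depends only on \( \|x\| \) and decreases as \( \|x\| \) grows, so the infimum is the value of \( \phi \) at the point of the closed ball farthest from the origin, namely \( \phi(x_0 + \epsilon \frac{x_0}{\|x_0\|}) \) when \( x_0 \neq 0 \) (and \( \phi \) at any boundary point when \( x_0 = 0 \)); this value is strictly positive. (To see that this is indeed a lower bound, note that \( B(x_0, \epsilon) \subset B(0, \epsilon + \|x_0\|) \).) For a general nonsingular Gaussian the same conclusion holds, because the density is continuous and strictly positive, so its minimum over the closed ball is positive.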
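To make the bound concrete, here is a small numerical sketch. It assumes a standard Gaussian in \( d = 2 \) with the illustrative values \( x_0 = (1, 0) \) and \( \epsilon = 0.5 \) (none of these come from the problem), computes the lower bound \( |B(x_0, \epsilon)| \, \phi(x_0 + \epsilon \frac{x_0}{\|x_0\|}) \) in closed form, and compares it with a Monte Carlo estimate of \( P(D_i < \epsilon) \).

```python
import math
import random

def ball_volume(d, eps):
    """Lebesgue volume of a Euclidean ball of radius eps in R^d."""
    return math.pi ** (d / 2) / math.gamma(d / 2 + 1) * eps ** d

def std_normal_density_at_radius(d, r):
    """Standard d-dimensional Gaussian density at any point with norm r."""
    return (2 * math.pi) ** (-d / 2) * math.exp(-r * r / 2)

def lower_bound(x0, eps):
    """Ball volume times the density at the ball's farthest point from 0."""
    d = len(x0)
    far_radius = math.sqrt(sum(c * c for c in x0)) + eps
    return ball_volume(d, eps) * std_normal_density_at_radius(d, far_radius)

def estimate_hit_probability(x0, eps, trials=100_000, seed=0):
    """Monte Carlo estimate of P(||X - x0|| < eps) for X ~ N(0, I_d)."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        sq_dist = sum((rng.gauss(0.0, 1.0) - c) ** 2 for c in x0)
        if sq_dist < eps * eps:
            hits += 1
    return hits / trials

# Illustrative values only (assumptions, not given in the problem).
x0, eps = (1.0, 0.0), 0.5
delta = lower_bound(x0, eps)
p_hat = estimate_hit_probability(x0, eps)
print(f"lower bound delta = {delta:.4f}, Monte Carlo P(D < eps) ~ {p_hat:.4f}")
```

The bound is crude, since it replaces the density by its smallest value on the ball, but all the proof needs is that it is strictly positive.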

So, basically, what we have shown here is that there exists a \( \delta > 0 \) such that \( P(D_i < \epsilon ) \geq \delta \).

Since \( \delta \) is a positive lower bound on a probability, we have \( 0 < \delta \le 1 \), and hence \( 0 \le 1 - \delta < 1 \).

Thus, since \( P(D_i > \epsilon) \le 1 - P(D_i < \epsilon) \le 1 - \delta \), we have \( \lim_{n \rightarrow \infty} P(D_i > \epsilon)^n \leq \lim_{n \rightarrow \infty} (1-\delta)^n = 0 \).

Hence we are done.
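To get a feel for how fast the bound \( (1-\delta)^n \) collapses, here is a minimal sketch. The value \( \delta = 0.04 \) and the tolerance are purely illustrative assumptions, and `trials_needed` is a hypothetical helper name introduced for this example.

```python
import math

def trials_needed(delta, tol):
    """Smallest n with (1 - delta)**n <= tol, for 0 < delta < 1 and 0 < tol < 1."""
    return math.ceil(math.log(tol) / math.log(1.0 - delta))

delta = 0.04  # illustrative lower bound on P(D_i < eps), not from the problem
for n in (10, 100, 1000):
    print(n, (1.0 - delta) ** n)

n_star = trials_needed(delta, tol=1e-6)
print("n needed for the bound to drop below 1e-6:", n_star)
```

Even a small \( \delta \) is enough, because the decay is geometric in \( n \).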

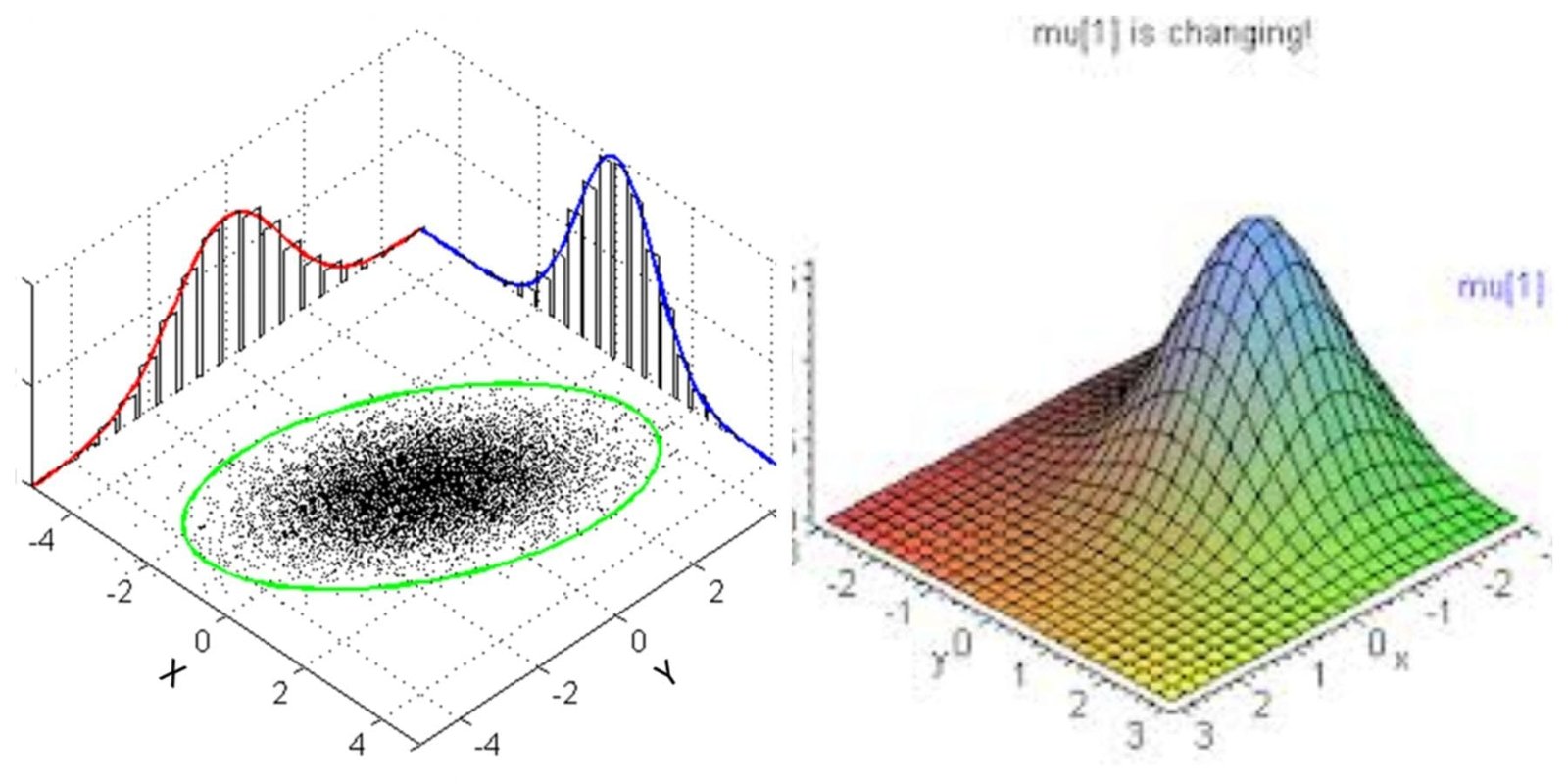

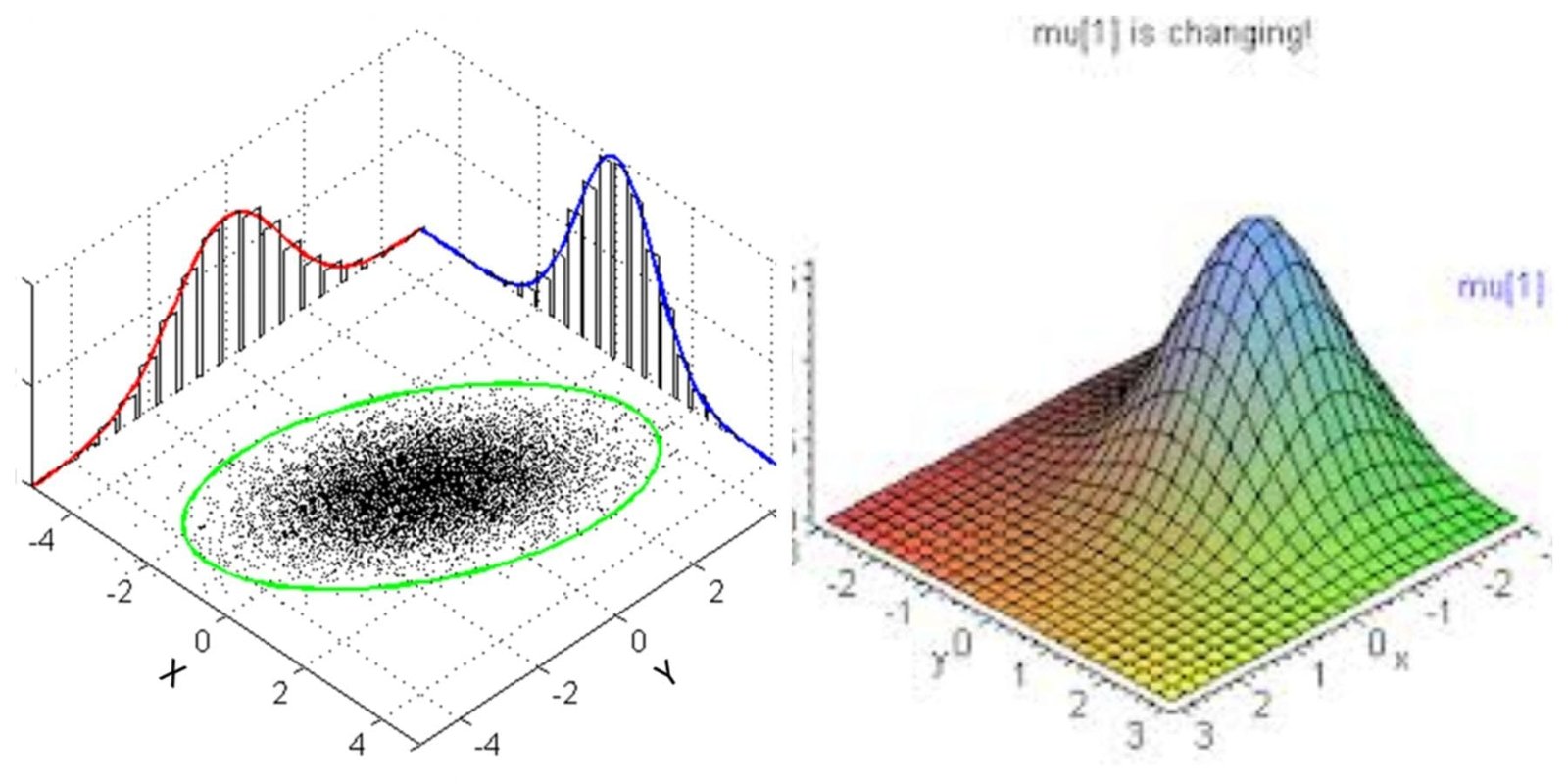

There is a large body of statistical literature on the equi-density contours of a multivariate Gaussian distribution.

Try to visualize them separately for a nonsingular and a singular Gaussian distribution. They are covered extensively in the books of Kotz and Anderson. Do give them a read!
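As a starting point for that visualization, here is a rough sketch for the two-dimensional, zero-mean case. For a covariance matrix \( \Sigma \) with eigenvalues \( \lambda_1 \ge \lambda_2 \), the contour at Mahalanobis radius \( k \) is an ellipse with semi-axes \( k\sqrt{\lambda_1} \) and \( k\sqrt{\lambda_2} \) along the eigenvectors of \( \Sigma \); when \( \Sigma \) is singular, one semi-axis shrinks to zero and the distribution lives on a line. The function name and the example matrices are illustrative assumptions.

```python
import math

def contour_semi_axes(a, b, c, k=1.0):
    """Semi-axis lengths of the radius-k contour for S = [[a, b], [b, c]].

    The lengths are k * sqrt(eigenvalue) for the two eigenvalues of the
    symmetric positive semidefinite matrix S, largest first.
    """
    mean = (a + c) / 2
    spread = math.sqrt(((a - c) / 2) ** 2 + b * b)
    lam_max = mean + spread
    lam_min = max(mean - spread, 0.0)  # clip tiny negative rounding error
    return k * math.sqrt(lam_max), k * math.sqrt(lam_min)

nonsingular_axes = contour_semi_axes(2.0, 0.0, 0.5)  # a genuine ellipse
singular_axes = contour_semi_axes(1.0, 1.0, 1.0)     # one axis collapses to 0
print(nonsingular_axes, singular_axes)
```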

Anderson has several books, and two of them are on multivariate analysis and elliptical contours. Could you say which one you mean?

I mean the book An Introduction to Multivariate Statistical Analysis.